The Real Problem with AI Isn’t the Models – It’s Everything Else!

Artificial intelligence is not the constraint most organisations think it is.

The models exist. The tools are widely available. The investment is already being made.

And yet, many organisations are still struggling to get consistent, reliable value from AI.

This is usually interpreted as a technology problem. It isn’t.

We Don’t Have an AI Problem. We Have an Integration Problem

If you speak to people actually trying to use AI in day-to-day operations, a pattern emerges fairly quickly:

- pilots that never quite scale

- outputs that are occasionally useful, but not consistently

- systems that don’t quite connect properly

- a general sense that “it works… but we don’t fully trust it”

Gartner has been noting for some time that a large proportion of AI initiatives fail to move beyond proof of concept. The usual explanation is a lack of maturity.

A more accurate explanation is integration.

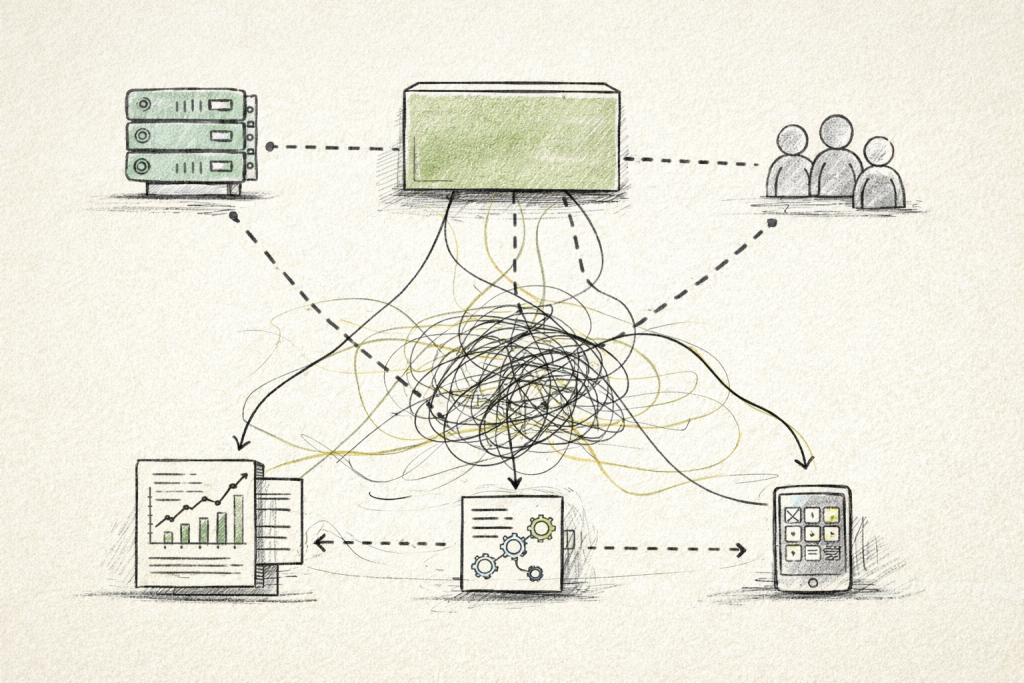

AI doesn’t sit neatly in one place. It depends on:

- the data it receives

- the systems it connects to

- the processes it feeds into

- and the people expected to act on its outputs

If those elements are not aligned, the model becomes the least interesting part of the problem.

AI placed on top of fragmented systems rarely produces reliable outcomes.

Data Isn’t the Bottleneck – Usable Data Is

There is a tendency to assume that organisations need more data. Most don’t.

They already have plenty. What they lack is data that is:

- consistent

- well-structured

- clearly defined

- and actually usable across systems

McKinsey has pointed out that organisations capture only a fraction of the value from their data, largely because it is fragmented and poorly integrated.

In practice, this looks like:

- different departments using different definitions for the same thing

- systems that don’t quite agree with each other

- records that are technically complete but practically unhelpful

AI systems are then asked to operate on top of this.

The result is not especially surprising:

outputs that are technically correct in places, but difficult to rely on overall.
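To make "systems that don’t quite agree with each other" concrete, here is a minimal sketch of a reconciliation check in Python. The system names (`crm`, `billing`), the field names, and the sample records are all hypothetical and only for illustration. It is one simple way to surface disagreements before an AI system is asked to work with the data, not a complete data-quality tool.

```python
from typing import Any


def find_disagreements(
    system_a: dict[str, dict[str, Any]],
    system_b: dict[str, dict[str, Any]],
    fields: list[str],
) -> list[tuple[str, str, Any, Any]]:
    """Return (record_id, field, value_in_a, value_in_b) for each mismatch."""
    mismatches = []
    # Only records present in both systems can be compared field by field.
    for record_id in sorted(system_a.keys() & system_b.keys()):
        for field in fields:
            a_val = system_a[record_id].get(field)
            b_val = system_b[record_id].get(field)
            if a_val != b_val:
                mismatches.append((record_id, field, a_val, b_val))
    return mismatches


# Hypothetical records: the same customers as seen by two different systems.
crm = {
    "C001": {"status": "active", "region": "South"},
    "C002": {"status": "active", "region": "North"},
}
billing = {
    "C001": {"status": "active", "region": "South"},
    "C002": {"status": "lapsed", "region": "North"},
    "C003": {"status": "active", "region": "East"},
}

report = find_disagreements(crm, billing, ["status", "region"])
for record_id, field, a_val, b_val in report:
    print(f"{record_id}: {field} is {a_val!r} in CRM but {b_val!r} in billing")
```

Each record here is complete in both systems, yet the two disagree on whether customer C002 is still active. That is the kind of gap that makes an output hard to rely on, however good the model is.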

Trust Is the Missing Layer

Even when the data and models are in place, something else tends to get in the way.

Trust.

Not in the abstract sense, but in a very practical one:

- Can we explain where this output came from?

- Can we see what data it used?

- Will it behave the same way tomorrow?

- What happens if it’s wrong?

The National Institute of Standards and Technology (NIST) has formalised this quite neatly in its AI Risk Management Framework, where trustworthiness is treated as a core requirement rather than an optional extra.

In reality, many organisations are still working this out after deployment.

Which is usually the more expensive way of doing it.

Trust needs to be built in from the start:

- clear data provenance

- transparent processing

- consistent behaviour under changing conditions

- and governance that people can actually follow

Without that, adoption tends to slow down quietly rather than fail loudly.
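As an illustration of what "clear data provenance" can look like in practice, the sketch below attaches a small provenance record to each AI output. The names used here (`ProvenanceRecord`, `record_output`, `fingerprint`, and the example model version and source names) are assumptions made for this example. They are not taken from the NIST framework or from any particular product.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ProvenanceRecord:
    """What was produced, by which model version, from which inputs."""
    output: str
    model_version: str
    source_ids: tuple[str, ...]
    input_hash: str
    created_at: str


def fingerprint(inputs: dict) -> str:
    """Hash the inputs in a key-order-independent way."""
    canonical = json.dumps(inputs, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def record_output(
    output: str, model_version: str, inputs: dict, source_ids: list[str]
) -> ProvenanceRecord:
    """Bundle an output with enough context to answer 'where did this come from?'"""
    return ProvenanceRecord(
        output=output,
        model_version=model_version,
        source_ids=tuple(source_ids),
        input_hash=fingerprint(inputs),
        created_at=datetime.now(timezone.utc).isoformat(),
    )


# Hypothetical usage: a recommendation based on data from two source systems.
rec = record_output(
    output="refer for manual review",
    model_version="example-model-v1",
    inputs={"customer_id": "C002", "status": "lapsed"},
    source_ids=["crm", "billing"],
)
print(rec.source_ids, rec.input_hash[:12], rec.created_at)
```

Storing a record like this alongside each output gives a starting point for the practical questions above: which data was used, which version of the model produced the result, and whether the same inputs were seen before.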

Integration as the Actual Innovation

There is a lot of focus on new models, new tools, and new capabilities. Which is understandable.

But in many cases, the real challenge is less exciting and more useful:

getting existing systems, data, and processes to work together properly.

This includes:

- how data moves between systems

- how AI fits into existing workflows

- how decisions are validated

- and how everything behaves outside of ideal conditions

This is not always described as innovation.

But it is usually where the value is.

From Pilots to Something That Actually Works

Most organisations are no longer asking whether they should use AI.

They are asking why it isn’t delivering what they expected.

The answer is rarely “the model isn’t good enough”.

It is more often:

- the data isn’t ready

- the systems aren’t aligned

- the process isn’t defined

- or nobody is entirely sure who is responsible

Moving beyond this requires a shift in focus: away from experimenting with tools and towards structuring the environment those tools operate in.

This is where approaches such as structured data management, governance frameworks, and clear integration patterns become more important than the choice of model.

A Slightly Better Question

Instead of asking:

“What can AI do for us?”

ask:

“What needs to be in place for this to work properly here?”

It’s a less exciting question. But it tends to produce better outcomes.

Where We Tend to Work

At KnowNow Information, most of our work sits in this less glamorous part of the problem.

That includes:

- helping organisations structure and manage their data so it can actually be used

- integrating systems so information flows in a sensible way

- and putting in place the governance needed so that AI outputs can be trusted

In practice, this often starts with relatively simple questions:

- What data do we actually have?

- Where does it come from?

- Who is responsible for it?

- And how is it being used?

Tools such as our Data Management Canvas are useful here, not as a theoretical exercise, but as a way of making these questions visible and structured early on.

Because once those fundamentals are clear, the conversation about AI tends to become much more practical.

Whether it’s smart infrastructure, digital place projects, or more traditional enterprise environments, the pattern is generally the same:

the organisations that focus on integration and data tend to get more value from AI than those that focus on the models alone.

This is not especially surprising once you see it a few times.

Conclusion

AI is not failing because the technology isn’t good enough.

It is failing because the surrounding systems, data, integration, and governance have not kept up.

Organisations that recognise this tend to make quieter, more practical progress.

Not by adopting more AI.

But by making better use of what they already have.

DP.

References

- Gartner (2023–2025), AI Adoption and Maturity Research

- McKinsey Global Institute (2021–2024), The State of AI

- NIST (2023), AI Risk Management Framework (AI RMF 1.0)

- IDC (2024), Worldwide Artificial Intelligence Spending Guide
